Student Experiment Flights 3/2/24

At 9 am on Saturday, March 2nd, 2024, we set up for student experiment payload practice flights in preparation for the total solar eclipse. These were flights #86 and #87 for the NASA Nebraska Space Grant team.

We did not fly any of the equipment for the NEBP streaming video balloon. Because we were not using the vent system and the Iridium, Derrick showed the students how to prepare the neck of the balloon “old school.” We put a piece of PVC in the neck of the balloon and secured the lines to the parachute with zip ties. We also used a simple fill tube rather than the quick connect version for filling the balloon through the vent.

For the tracking on the first balloon, we used the StratoStar SatCom system and an APRS beacon. This balloon also carried the Insta360 camera for testing and a solar panel experiment.

The second balloon carried two experiment payload boxes: a UV sensor experiment and one prepared with paint in balloons for a combination engineering/art project. For the tracking on the second balloon, we used two SPOT locators, one provided by the national NEBP and one from the AXP club.

The first balloon was recovered in a suburb in Papillion by Derrick and Kendra. It landed not far from the sidewalk and was just a few miles from Michael and Kendra’s house.

The second balloon was recovered by a student recovery team in a field in Iowa.

NEBP Annular Eclipse 10/14/23

NEBP Tethered Flight 7/22/23

As a part of the Nationwide Eclipse Ballooning Project (NEBP), the Nebraska team continues to test the equipment we will be using to stream live video during the next two solar eclipses.

We decided to do a tethered balloon test as a step beyond bench testing the equipment but short of a full flight that includes release and recovery of the payloads.

The weather was warm and clear with just a little breeze.

We used a large balloon left over from the 2017 Eclipse Ballooning project. It was valuable to the team to practice balloon filling techniques in an unhurried environment.

We used a fishing reel to tether the balloon and let it out to about 100 ft. in the air for our testing purposes.

Walking the balloon out.

The vent open and close commands worked, and we had a successful cut down.

The website that pulls the coordinates from our GPS payload was not functioning, so our base station did not automatically track the payloads.

We had our new grid antenna set up, but have yet to test the RFD900 payload connection and software.

As the payloads dropped from about 80 ft., the parachute did not fully deploy. The 3D printed vent was damaged, as were some parts of the styrofoam payload boxes. We will need to fix those before our actual test flight.

NEBP Workshop 5/26/23

Astronomy Scaled Models

Besides the traditional solar system scaled model, you can use other models to compare sizes of astronomical objects.

For example, the following images show a scaled model of the Sun as it is now (tiny yellow ball) and the Sun when it turns into a red giant (big red ball). There is a tiny magnet in the small yellow ball and another one in the side of the big red ball to keep them together.

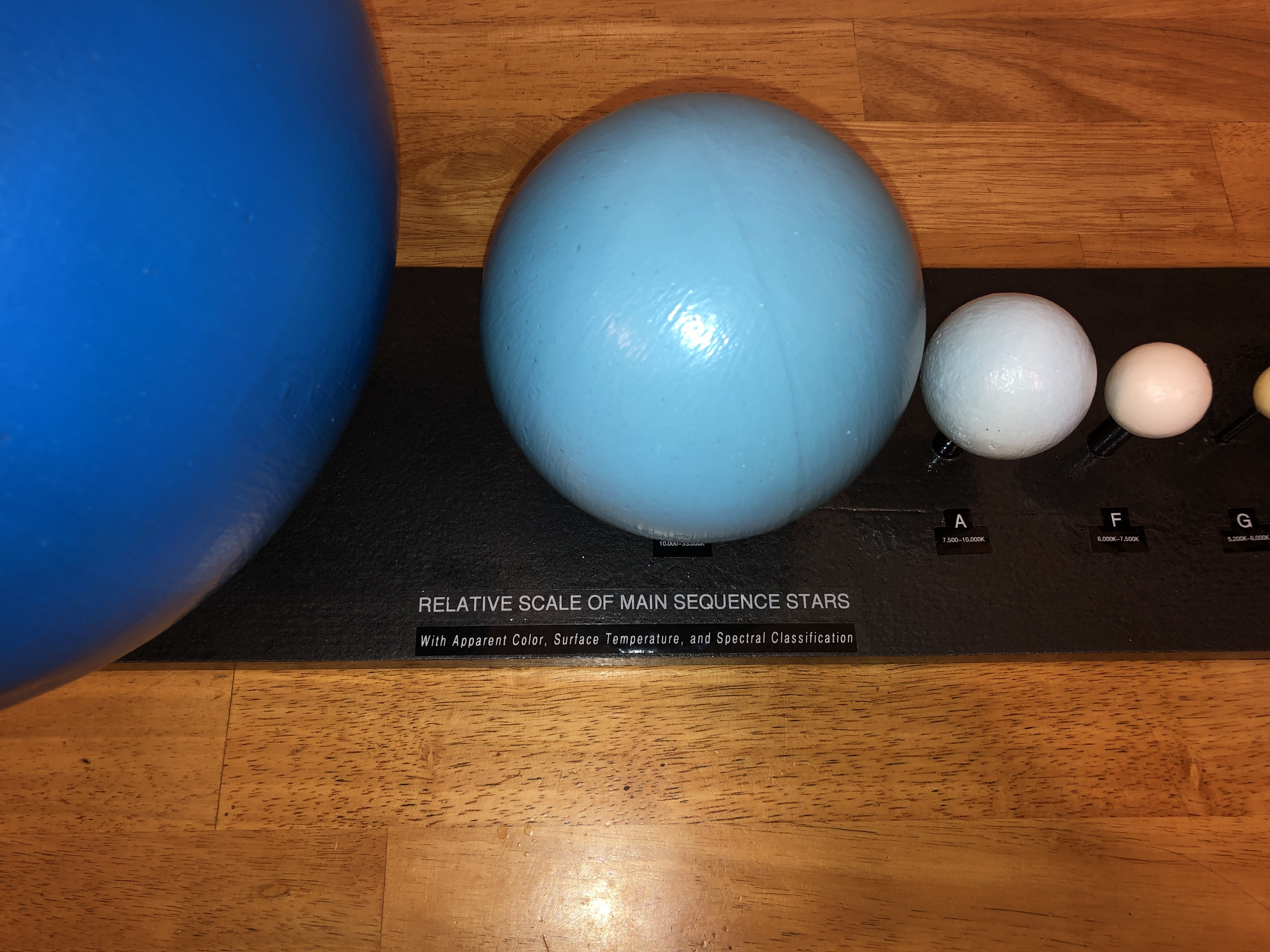
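If you want to build a model like this yourself, the arithmetic is just one scale factor applied to every object. The following is a minimal sketch, not the sizes used for the model pictured: the 1 cm yellow ball and the ratio of 200 for the red giant Sun are assumptions for illustration (published estimates of the red giant size vary), and the function names are made up for this example.

```python
# Approximate present-day diameter of the Sun in kilometers.
SUN_DIAMETER_KM = 1.39e6

# Assumption for illustration: the red giant Sun is about 200 times
# wider than the Sun today. Estimates vary, so change this as needed.
RED_GIANT_RATIO = 200

def scale_factor(real_size_km, model_size_cm):
    """Centimeters of model per kilometer of the real object."""
    return model_size_cm / real_size_km

def model_size_cm(real_size_km, factor):
    """Size of an object in the model, using the same scale factor."""
    return real_size_km * factor

# Assumption: the small yellow ball is 1 cm across.
factor = scale_factor(SUN_DIAMETER_KM, 1.0)
red_giant_cm = model_size_cm(SUN_DIAMETER_KM * RED_GIANT_RATIO, factor)

print(f"Red giant ball: about {red_giant_cm:.0f} cm across")
```

The same two functions work for any pair of objects, as long as every real size is in the same units and is multiplied by the same factor.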

This next model is of the relative sizes of the main sequence stars.

The following is an artistic interpretation of the Milky Way, printed on both sides with cotton batting inside and coated with a waterproof sealer to make it solid. This makes it possible to feel the shape of the spiral arms.

3D Astronomy Prints

Comet 67P/Churyumov–Gerasimenko 3-D printed in white.

Link to 3D Print file: https://sci.esa.int/web/rosetta/-/54728-shape-model-of-comet-67p

The Moon that I had printed was not as good a print. It was smaller, and you can see the layers of filament. Also, the bottom was rough because the raft (base) it needed for printing had to be cut off.

Uncovering Ancient Patterns in Space Rocks

Tips for Teaching Physics and Astronomy Online

This is for my professor and teacher friends who may be teaching online for the first time because of the school closures. I’ve been teaching online astronomy, astronomy lab, and algebra-based physics classes for well over a decade. I am also a lead online faculty member for the physical sciences for my school and a Quality Matters master reviewer. Here are some offhand suggestions for the current situation based on my experiences.

1. Cut yourself some slack. Most people take at least a semester to convert their classes to an online environment. You may only have a few days to adjust. Take a deep breath and take it day-by-day or week-by-week or module-by-module.

2. Cut your colleagues some slack. Many of them did not want to teach online and are forced to now by circumstance. Create a community to help each other with ideas and tips.

3. Cut your students some slack. They may not be used to online learning, which requires them to bear more responsibility for their learning. Over-communicate with them. Post announcements for the class. Push these to email or text if they have that set up. Put dates on the calendar. These will usually show up in their “to do” lists on their college home page.

4. Be clear about expectations. Tell them how often they are expected to post to discussions. Give them examples of an excellent discussion post, an ok discussion post, and a poor discussion post (like, “I agree.” or “Good post.”). If someone misses homework or does not post for a week, send an email asking if they are ok or if they need help. You can use tools in the LMS (learning management system, like Canvas or Blackboard) to focus on those who do not log in for a few days. Don’t think of this as “hand-holding.” It is how you let online students know that they are missed. They may be having major life issues and not want to bother you, or they may be too embarrassed to ask.

5. Set up a discussion for students to post questions if you are not immediately available and encourage them to help each other. Even though they are no longer face-to-face, let them know that we are all in this together and we want everyone to be successful in their learning.

6. Zoom may be a great option for doing meetings or recording lectures, but the free version may be overloaded soon, so you might look into alternative methods. I have physics students use their cell phone cameras to take photos of their written work to submit rather than trying to type it into an equation editor. I sometimes have them video their problem solving methods (usually by talking over their work on a sheet of paper and explaining it) and upload that in a discussion to share with other students. These videos are usually short and the files are small enough to not be too much of an issue. Ask students for suggestions on what apps or tools to use for communication and learning. Astronomy education by TikTok? Why not? You might learn something too.

7. Realize that you are not going to be able to effectively reproduce everything that you do in class in the same way online. There are ways to set up groups in the LMS to try to do interactive lecture tutorials or think-pair-share, but I think it is more hassle than it’s worth. My community college students have kids, have jobs, and have lots of things going on in their lives. I cannot expect everyone in the class to log in at a certain day and time to participate in synchronous activities. So, I use asynchronous activities exclusively.

8. If you have a small and dedicated class, you could set up a Zoom meeting or a chat in the LMS during the time you would normally meet.

9. If you have PowerPoint presentations, you could record a presentation video in Presenter Mode using screen capture. Choose just the area of the screen that shows the slide, so you keep access to your notes and the next slide without recording that extraneous information, then upload the video to YouTube. This also makes for a smaller video file at a slightly lower resolution.

10. I would record only short lectures on important topics or points, or put breaks in the presentation so students can navigate it more easily. I would suggest no more than 5-7 minutes for each topic. For accessibility purposes, if these classes were to meet Quality Matters standards, all of these videos would eventually need to be closed captioned or include a transcript for students who are hard-of-hearing. Perhaps you can get ahead of the game by writing a script, following it, and uploading that text along with the video.

11. If you make videos, make sure the lighting is decent and the mic level is appropriate.

12. I suppose people will be most nervous about testing in the online environment. We have some math classes that require proctoring for certain tests, and I suspect that our institution will be more lax on that requirement if the campuses and testing centers are closed. Multiple choice and true-false questions can be graded automatically. Essays will have to be graded by hand. If you do some computational problems, there are tools to randomize the values if you code the equations so every student has a different answer. You can set a time limit for online tests to reduce the answering by Google, but then you will have to make accommodations for individuals who require additional testing time.
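To give a feel for what "coding the equations" means in tip 12, here is a minimal sketch in Python. It is not how Canvas, Blackboard, or any particular LMS does it. The function names, the quiz label, the value ranges, and the 2% grading tolerance are all assumptions for illustration. Each student's numbers come from a random generator seeded with their ID, so the same student always sees the same problem, and the answer key is computed from the same equation.

```python
import random

def make_problem(student_id, quiz_id="kinematics-1"):
    """Build one constant-acceleration problem with values tied to the student."""
    # Seeding with the quiz and student ID makes the values repeatable.
    rng = random.Random(f"{quiz_id}:{student_id}")
    v0 = rng.randint(5, 25)   # initial speed in m/s
    a = rng.randint(1, 5)     # acceleration in m/s^2
    t = rng.randint(2, 10)    # elapsed time in s

    # The coded equation: d = v0*t + (1/2)*a*t^2
    answer = v0 * t + 0.5 * a * t ** 2

    prompt = (
        f"A cart moving at {v0} m/s accelerates at {a} m/s^2 "
        f"for {t} s. How far does it travel, in meters?"
    )
    return {"prompt": prompt, "values": (v0, a, t), "answer": answer}

def is_correct(submitted, answer, rel_tol=0.02):
    """Accept answers within a relative tolerance to allow for rounding."""
    return abs(submitted - answer) <= rel_tol * abs(answer)

problem = make_problem("student-001")
print(problem["prompt"])
print(is_correct(problem["answer"], problem["answer"]))
```

With value ranges this small, two students can still end up with the same numbers by chance, so widen the ranges if that matters for your test.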

Good luck and may the odds be ever in your favor.

MCC Launch 4/27/19

Cosmic Fireworks: Tips for Hosting Large Star Parties

Space Crafts #5 – Near Space Ukulele

Nebraska Star Party Launch – 8/7/18

Eureka! STEM Launch 2018

MCC Launch – 4/21/18

Putty Drop Experiment

Total Solar Eclipse 8/21/17 – Part 2

Total Solar Eclipse 8/21/17

Front Page of Omaha World Herald – 8/12/17

Aerospace Educators Workshop 2017 – Conclusions

Read the experiment conclusions written by the participants of the Aerospace Educators Workshop 2017.